Fractional Database & Architecture Engineering Services

ETL Pipelines - Data Migrations - RDBMS Optimizations - Upgrades - Modernizations - Disaster Recovery - High Availability

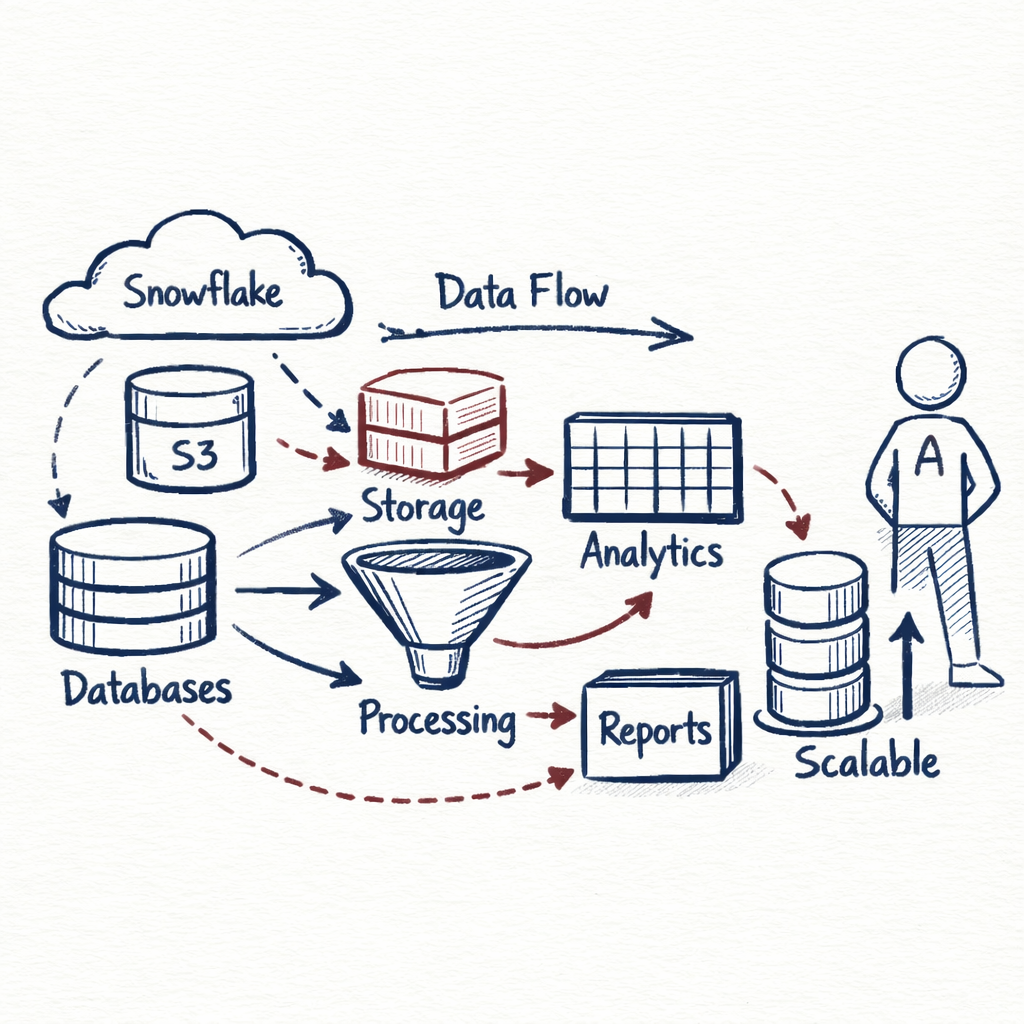

Data Platforms & Architecture

I design data platforms to support analytics, reporting, and downstream systems.

This includes structuring data for efficient querying, organizing storage in systems such as Snowflake or S3-based environments, and designing data flows that scale with increasing data volume and complexity.

Data Quality & Validation

My work in data quality focuses on identifying and resolving issues across pipelines and source systems.

This includes implementing validation logic, detecting anomalies, and enforcing data consistency before data reaches downstream systems and reporting layers.

Data quality metrics are captured and surfaced through dashboards, providing visibility into data integrity over time. Large-scale assessments are performed using PySpark to analyze and validate high-volume datasets efficiently.
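As a rough illustration of the validation logic and quality metrics described above, the sketch below applies a few rules to a batch of rows and computes a pass rate per rule. It is plain Python for readability, not PySpark, and everything in it is hypothetical: the `Rule` class, the `run_rules` helper, the rule names, and the sample rows are assumptions made for the example, not taken from a real engagement.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List


@dataclass
class Rule:
    """A named validation rule (hypothetical structure for illustration)."""
    name: str
    check: Callable[[dict], bool]  # returns True when the row passes


def run_rules(rows: Iterable[dict], rules: List[Rule]) -> Dict[str, float]:
    """Return the fraction of rows that pass each rule."""
    rows = list(rows)
    failures = {rule.name: 0 for rule in rules}
    for row in rows:
        for rule in rules:
            if not rule.check(row):
                failures[rule.name] += 1
    total = len(rows)
    # An empty batch is reported as fully passing rather than dividing by zero.
    return {
        name: (1 - count / total) if total else 1.0
        for name, count in failures.items()
    }


# Hypothetical rules: a required key and a non-negative numeric amount.
RULES = [
    Rule("customer_id_present", lambda r: r.get("customer_id") is not None),
    Rule(
        "amount_non_negative",
        lambda r: isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0,
    ),
]

# Made-up sample data, one row failing each rule.
sample_rows = [
    {"customer_id": 1, "amount": 10.0},
    {"customer_id": None, "amount": 5.0},
    {"customer_id": 3, "amount": -2.0},
    {"customer_id": 4, "amount": 7.5},
]

pass_rates = run_rules(sample_rows, RULES)
for rule_name, rate in pass_rates.items():
    print(f"{rule_name}: {rate:.0%} of rows passed")
```

Per-rule pass rates like these are the kind of metric that can be stored per pipeline run and charted on a dashboard over time.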

Data Governance & Master Data Management

I have experience supporting data governance initiatives, including master data management, data standardization, and validation workflows using platforms such as Semarchy xDM.

My work in this area focuses on improving data consistency, establishing clear data definitions, and supporting processes that maintain data quality across systems.

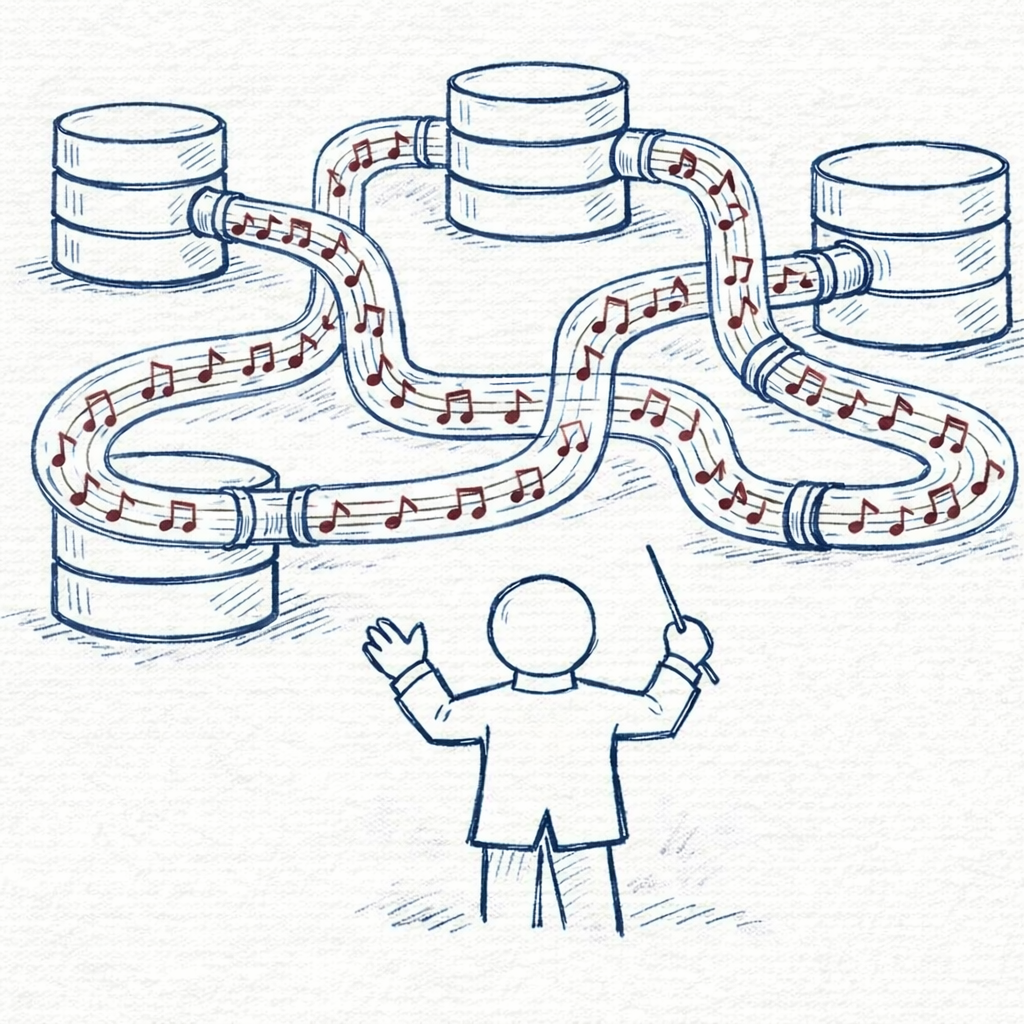

Data Integration & Pipeline Development

I develop data processing pipelines using Python, Apache Spark/PySpark, and dbt. For process orchestration, I use AWS Glue or Airflow. I am experienced in integrating a variety of sources, including AWS S3, REST APIs, and all major relational databases.

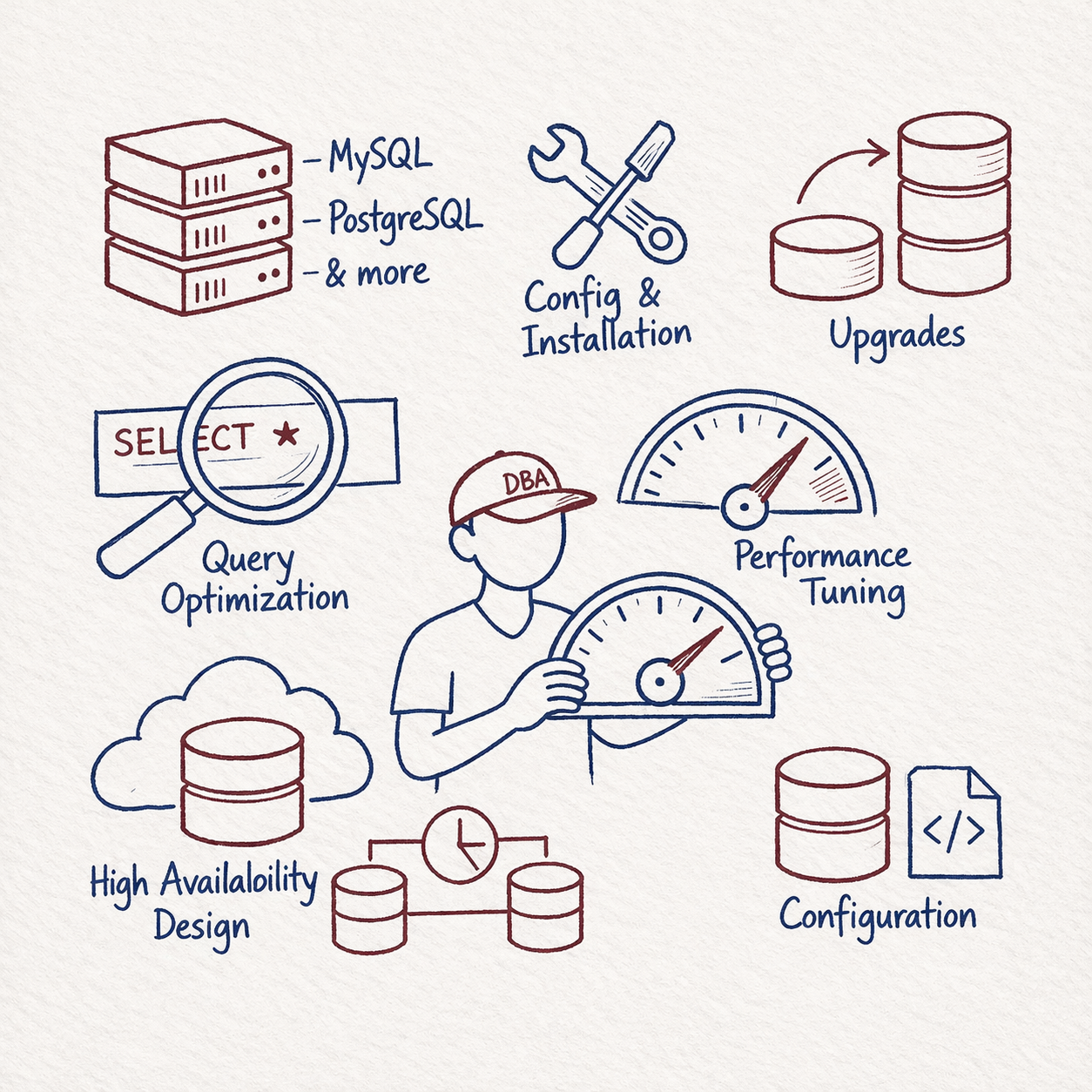

Database Systems & Performance

I provide support for underlying database systems, including installation and configuration, upgrades, performance tuning, query optimization, and high availability design for MySQL, PostgreSQL, and related platforms.

Monitoring & Observability

I implement monitoring and alerting for data pipelines and database systems using tools such as AWS CloudWatch, Performance Insights, and Percona Monitoring & Management.

Monitoring is designed to provide visibility into pipeline execution, system performance, and data quality, including notifications when measured metrics exceed defined thresholds.
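The threshold-based notification idea can be sketched in a few lines of plain Python. This is a simplified illustration, not a CloudWatch or Percona Monitoring & Management configuration: the `Threshold` class, the `find_breaches` helper, the metric names, and the limits are all hypothetical, and a real setup would send the resulting messages to an alerting channel instead of printing them.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Threshold:
    """Upper limit for a named metric (hypothetical structure)."""
    metric: str
    max_value: float


def find_breaches(metrics: Dict[str, float], thresholds: List[Threshold]) -> List[str]:
    """Return one message per metric whose value exceeds its threshold."""
    breaches = []
    for threshold in thresholds:
        value = metrics.get(threshold.metric)
        # Metrics missing from this sample are skipped, not treated as breaches.
        if value is not None and value > threshold.max_value:
            breaches.append(
                f"{threshold.metric}={value} exceeds threshold {threshold.max_value}"
            )
    return breaches


# Hypothetical limits covering pipeline execution, system performance, and data quality.
thresholds = [
    Threshold("pipeline_runtime_seconds", 900.0),
    Threshold("replication_lag_seconds", 30.0),
    Threshold("null_rate_percent", 2.0),
]

# Made-up measurements for a single check; only the runtime is over its limit.
metrics = {
    "pipeline_runtime_seconds": 1240.0,
    "replication_lag_seconds": 4.0,
    "null_rate_percent": 0.5,
}

breaches = find_breaches(metrics, thresholds)
for message in breaches:
    print("ALERT:", message)
```

Keeping the limits in data, separate from the checking logic, means thresholds can be adjusted per environment without changing code.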